The algorithmic question is whether two customer profiles are similar or not. Cosine similarity is perhaps the simplest way to determine this. If one can compare whether any two objects are similar, one can use the similarity as a building block to achieve more complex tasks, such as:

- search: find the most similar document to a given one
- classification: is some customer likely to buy that product
- clustering: are there natural groups of similar documents
- product recommendations: which products are similar to the customer's past purchases

This is the first article of a set of articles describing the intuition, definition and use cases of cosine similarity in Big Data.

Documents are vectors, customer profiles are vectors

The first leap of imagination is to stop thinking of documents as text and of customer profiles as sets of clicks. Instead we model documents and customer profiles as vectors.

Definition

A vector, as it is defined in linear algebra, is a tuple of m numbers. In other words, the vector is a list where the elements are floating point numbers. The vector is of fixed size, which here will be denoted as m. In Python we can model vectors like this:

    # Example inputs, assumed for illustration (the original values were lost)
    document = ["the", "cat", "sat", "on", "the", "mat"]
    word_to_id = {"the": 0, "cat": 1, "sat": 2, "on": 3, "mat": 4}

    m = len(word_to_id)   # vocabulary size
    vector = [0] * m      # create a vector of dimension the vocabulary size
    for word in document:
        word_id = word_to_id[word]
        vector[word_id] += 1
    # vector[word_id] = count of word_id in document;
    # if a word does not appear, its count is 0

Observations

The vector representation of documents loses word order

As can be seen, the vector representation loses information about the ordering of the words. From the vector, we can read how many times each word occurs in the document. We cannot reconstruct the original sentence from the vector. However, for many tasks the "vector approximation" is sufficient to capture the meaning of the original document.
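To connect the count vectors above to the similarity question, here is a minimal sketch of cosine similarity between two word-count vectors, using the standard definition cos θ = (a · b) / (|a| |b|). The helper names `to_count_vector` and `cosine_similarity`, the tiny vocabulary and the example sentences are illustrative assumptions, not part of the original article.

```python
import math

def to_count_vector(document, word_to_id):
    """Count how often each vocabulary word occurs in the document."""
    vector = [0] * len(word_to_id)
    for word in document:
        if word in word_to_id:  # words outside the vocabulary are skipped
            vector[word_to_id[word]] += 1
    return vector

def cosine_similarity(a, b):
    """cos(theta) = (a . b) / (|a| * |b|); returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical vocabulary and documents, chosen only for illustration
word_to_id = {"dog": 0, "bites": 1, "man": 2, "cat": 3, "sleeps": 4}
doc_a = "dog bites man".split()
doc_b = "man bites dog".split()  # same words as doc_a, different order
doc_c = "cat sleeps".split()     # no words in common with doc_a

vec_a = to_count_vector(doc_a, word_to_id)
vec_b = to_count_vector(doc_b, word_to_id)
vec_c = to_count_vector(doc_c, word_to_id)

sim_ab = cosine_similarity(vec_a, vec_b)
sim_ac = cosine_similarity(vec_a, vec_c)
print(round(sim_ab, 6), round(sim_ac, 6))
```

Because word order is lost, `doc_a` and `doc_b` map to the same vector and their similarity is 1 up to floating-point rounding, while `doc_a` and `doc_c` share no words and score 0.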

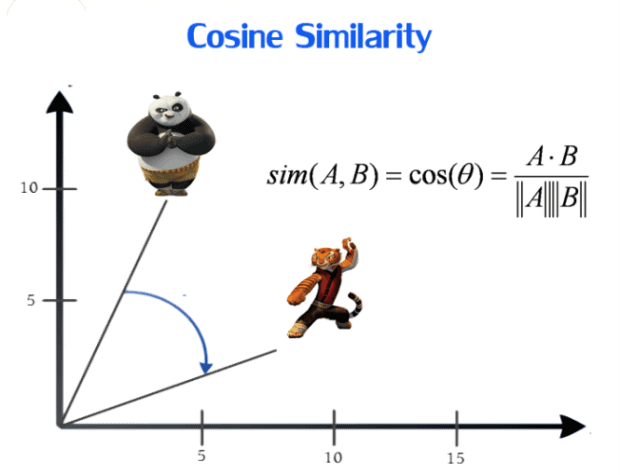

The cosine distance and cosine similarity metrics are mainly used to measure how similar two data points are. As the cosine distance between the data points increases, the cosine similarity decreases, and vice versa. Thus, points separated by a smaller angle are more similar than points separated by a larger one. Cosine similarity is given by cos θ, where θ is the angle between the two points viewed as vectors from the origin, and cosine distance is 1 - cos θ.

In the above image, there are two data points shown in blue. The angle between these points is 90 degrees, and cos 90° = 0. Therefore, the two points shown are not similar, and their cosine distance is 1 - cos 90° = 1.

Now if the angle between the two points in the above figure is 0 degrees, then the cosine similarity is cos 0° = 1 and the cosine distance is 1 - cos 0° = 0. We can then interpret the two points as 100% similar to each other.

If instead the value of θ in the above figure is 60 degrees, then by the cosine similarity formula cos 60° = 0.5 and the cosine distance is 1 - 0.5 = 0.5. Therefore the points are 50% similar to each other.

Where is it used?

The business use case for cosine similarity involves comparing customer profiles, product profiles or text documents. The cosine metric is mainly used in collaborative-filtering-based recommendation systems to offer future recommendations to users.

Taking the example of a movie recommendation system, suppose one user (User #1) has watched movies like The Fault in Our Stars and The Notebook, which are of the romantic genre, and another user (User #2) has watched movies like The Proposal and Notting Hill, which are also of the romantic genre. The recommendation system will use this data to recommend The Proposal and Notting Hill to User #1, since User #1 and User #2 both prefer the romantic genre and it is likely that User #1 will want to watch another romantic movie rather than a horror one.

Similarly, suppose User #1 loves to watch horror movies and User #2 loves the romance genre. In this case, User #2 won't be recommended a horror movie, as there is no similarity between the romantic genre and the horror genre.
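The 0, 60 and 90 degree cases and the movie example from this section can be checked numerically. The sketch below assumes two-dimensional unit vectors for the angle cases and hypothetical watch counts per genre, ordered as `[romance, horror]`, for the users. The vectors, counts and helper names are illustrative assumptions and do not come from the original text.

```python
import math

def cosine_similarity(a, b):
    """cos(theta) between vectors a and b; assumes neither is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def cosine_distance(a, b):
    """Cosine distance is defined as 1 - cosine similarity."""
    return 1.0 - cosine_similarity(a, b)

def unit_vector(degrees):
    """2-D unit vector at the given angle from the x-axis."""
    theta = math.radians(degrees)
    return [math.cos(theta), math.sin(theta)]

# Angle cases from the text: 0, 60 and 90 degrees
reference = unit_vector(0)
angle_results = {}
for degrees in (0, 60, 90):
    other = unit_vector(degrees)
    angle_results[degrees] = (
        cosine_similarity(reference, other),
        cosine_distance(reference, other),
    )
    print(degrees, [round(v, 6) for v in angle_results[degrees]])

# Hypothetical watch counts per genre, ordered as [romance, horror]
romance_fan_1 = [2, 0]
romance_fan_2 = [2, 0]
horror_fan = [0, 3]

sim_same_genre = cosine_similarity(romance_fan_1, romance_fan_2)
sim_diff_genre = cosine_similarity(romance_fan_1, horror_fan)
print(sim_same_genre, sim_diff_genre)
```

Up to floating-point rounding, the similarities for 0, 60 and 90 degrees come out as 1, 0.5 and 0, matching the distances 0, 0.5 and 1 described above. The two romance profiles point in the same direction and score 1, while the romance and horror profiles are orthogonal and score 0.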
